“Principles for Tapping Higher Cognitive Levels of Learning through Constructed-Response Items”

10/2/2016

I think we can all look back at our past education, whether at the elementary, high school, or even undergraduate level, and realise that many of our examinations (e.g., multiple-choice questions, short-answer questions, and essays) were inherently biased. It can also be argued that they perhaps did not follow the basic guidelines for developing constructed-response items, as discussed by Gareis and Grant in chapter 5, titled “How do I Create a Good Constructed-Response Item?” In this chapter, the authors present the guiding principles of a well-designed constructed-response item.

The three guidelines are as follows:

While most of these guidelines appear to be clear and straightforward, you would be surprised to learn that many teachers and professors do not follow these three simple steps. For example, something as simple as “avoid options within the problem” is easily neglected in most university-level examinations. To expand on this idea, I am going to draw from my own experience as a student. During my undergraduate degree, for every English essay I ever wrote, the professor would provide at least ten ideas as essay topics. Now, don’t get me wrong, I always enjoyed looking through the plethora of ideas until I found the one that sparked my curiosity. Or, let’s be honest, the topic I knew I could write a killer essay about. I believe that this was the thought process that motivated many students to choose a certain topic. But herein lies the problem: the professor could not see whether each of his or her students fully grasped the intended learning outcomes. In short, simply because I was able to write an exposé of Margaret Atwood’s The Journals of Susanna Moodie did not necessarily mean that I understood how to compare Leacock’s Sunshine Sketches of a Little Town to Sinclair Ross’s As for Me and My House.

So, how can I integrate my knowledge of these principles into future summative assessments? As a summative assessment for my unit plan, I am having students develop a Career Plan Portfolio. The career plan will include items such as a cover letter, a resume, goals, and the requirements to achieve these goals. For each aspect of the portfolio, students will be given a template and success criteria, and the desired outcome will be made clear to them. To ensure clarity, I will model the desired outcome for each component of the portfolio. I think the main problem I risk encountering is that the assignment is too open, and this can be ambiguous for some students. To solve this problem, I think I can provide students with clear framing questions and prompts, as indicated in the chapter. I will also ensure that the scoring criteria are clear by providing students with a checklist.

Reference: Gareis, C. R., & Grant, L. W. (2015). Teacher-made assessments: How to connect curriculum, instruction, and student learning. NY: Routledge.


Every time I am asked, “What is the best lesson you have ever taught?” I always go back to my animal adaptation lesson, titled “Extreme Habitats Means Extreme Adaptations.” This lesson was part of the Habitat and Community Strand in the Science and Technology curriculum. For this lesson, I thought it would be interesting to show the students different animal adaptations, rather than just tell the students about them. This was an extremely interactive lesson, and although it was chaotic, the students really enjoyed learning about the different types of adaptations through experimentation.

To facilitate learning, I divided the class into three stations. In the first station, entitled Brilliant Bird Beaks, students were encouraged to look at a series of different bird pictures. From the pictures, students were able to identify several different types of birds, each having different beaks, coloration, and body shapes. By putting different tools to the test, students were able to identify which tool corresponded to which bird beak (e.g., a medicine dropper corresponds to a hummingbird’s beak). In the second station, entitled Desert Plants, students learned how a cactus is able to survive in the extremely hot and dry climate of the desert. In the final science station, entitled Polar Bear Blubber, students learned how polar bears are able to swim in the Arctic Ocean without freezing. Wearing a “blubber glove,” students dipped their hand in cold water and recorded their findings. There was also a Free Station where students could reflect upon their learning, ask questions, and take a few minutes to complete their question sheet.

This activity was created for a split grade 3/4 class, but for the purpose of this ticket in the door, I decided to use a specific expectation from the grade 4 science curriculum.

Specific Expectation: 3.7 Describe structural adaptations that allow plants and animals to survive in specific habitats.

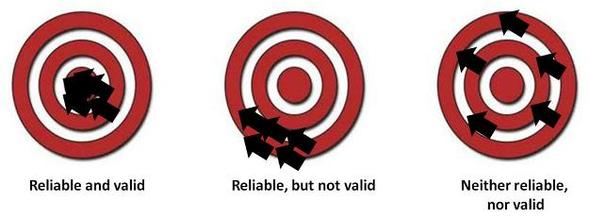

Personally, I find that thinking through expectations in terms of these three contents is beneficial because it allows the teacher to understand the intended learning outcome, the skills that students need to engage with that outcome, and finally the material required. While looking at the three different content areas of each specific expectation can be cumbersome, it does allow the teacher to understand each part of the expectation, which helps ensure a more reliable and valid assessment.

Reference: Gareis, C. R., & Grant, L. W. (2008). Teacher-made assessments: How to connect curriculum, instruction, and student learning. Larchmont, NY: Eye On Education.

In education, there seems to be a certain emphasis placed on reliability and validity in assessment. But how can we ensure that our assessment methods are both valid and reliable? As argued by Gareis and Grant in chapter 2, reliability and validity work hand in hand and are especially important when assessing students.

To provide a clear picture, let's begin by defining both terms. As indicated in the chapter, validity refers to the extent to which a test, quiz, or project measures what it is supposed to measure. In other words, you would not have students solve an algebra problem to measure how motivated they are. Reliability, on the other hand, refers to the extent to which a quiz, test, or project provides consistent results. In this context, whether the test or exam is given in the morning, afternoon, or night, the scores are consistent.

To further expand on both concepts, the authors provide the metaphor of validity as an archery target and reliability as the results of the shots at the target. Visually, this metaphor is quite appealing because the two concepts can be difficult to differentiate. From my understanding, the center of the target, or the bull’s eye, is a metaphor for the concept you are trying to measure. In this case, validity could be defined in terms of the construct we are trying to measure.
For example, validity concerns whether a test accurately assesses a student’s understanding of a mathematical concept, or whether a test accurately scores a student’s intelligence. On the other hand, as previously mentioned, reliability refers to the extent to which a quiz, test, or project provides consistent results. For example, a student might achieve consistent scores on their math homework, but the homework is not relevant to the day's math lesson. In this example, we see that the results are reliable but are in fact not valid. So, in this case, we could take a look at the second figure in the diagram. The arrows are all clustered in the same general area, but completely missed the target.

So, how do you achieve validity and reliability in the classroom? Well, I don't think there is any clear answer to this question, but as teachers, there are certain steps we can take to ensure that our assessment methods are valid and reliable. As maintained by Alias, it is important to begin by setting the objectives. Alias argues that by having a list of set objectives that your quiz, exam, or test is trying to measure, you will be able to begin to gauge whether the test is valid or not. This idea makes me think about how providing students with reliable and valid assessment strategies plays a huge role in the classroom. I think that it is fair to say that assessment is like a science experiment. In order to ensure that the findings and conclusions are valid and reliable, we have to ensure that the proper protocols are followed at each step. In short, validity and reliability in our assessment strategies help us maintain a certain level of accountability.

References:

Alias, M. (2005). Assessments of Learning Outcomes: Validity and Reliability of Classroom Tests. Retrieved September 25, 2016, from http://www.academia.edu/194058/Assessments_of_Learning_Outcomes_Validity_and_Reliability_of_Classroom_Tests

Classroom Assessment | Basic Concepts. (n.d.). Retrieved September 25, 2016, from http://fcit.usf.edu/assessment/basic/basicc.html

J. (2014). Introduction to Reliability and Validity. Retrieved September 25, 2016, from https://www.youtube.com/watch?v=Yr817Iy5pfo
